Stopping Phishing Attacks and Socially Engineered Threats from ChatGPT

What’s the difference between a tool and a weapon? It’s all about intent. What someone uses for creative purposes can also be used for malicious purposes.

Consider generative AI, which includes popular technologies like ChatGPT and Google Bard. Most people are excited by these technologies for their ability to quickly produce text, images, and code when prompted. Drawing from vast data sets, these tools synthesize existing information to generate interesting and even practical responses.

But generative AI can also be weaponized by cybercriminals for phishing attacks and social engineering scams. It’s time to shine a light on the dark side of generative AI so your organization can protect itself against bad actors.

The Dark Side of Generative AI

Email is a fundamental communication channel for businesses. But it’s also a prime vector for cybercriminals trying to trick targets into sharing sensitive information.

Phishing attacks, for instance, are nothing new. Bad guys disguise their attacks as legitimate emails to steal sensitive information from their targets. Employees are trained to spot red flags such as spelling and grammatical errors. But detecting malicious emails becomes much harder when attackers use tools like ChatGPT or Bard to generate polished email copy that is free of typos and grammatical mistakes.

Admittedly, some generative AI tools have built-in safeguards against this activity. ChatGPT won’t respond to users who explicitly prompt it to “generate a phishing email.” But with a little finesse, bad actors can ask the tool to write a professional email from a real-world brand urging the recipient to reinstate their account by clicking a link. An employee who relies on spotting the traditional red flags could easily fall for this AI-aided attack.

Cybercriminals can also use generative AI to research their targets and make their email-based attacks more persuasive. Social engineering attacks rely on convincing targets to take specific actions, such as sharing sensitive information or transferring money. By gathering public details such as a company’s organizational structure, attackers can tailor their messages to match what their targets expect to see.

What a ChatGPT Attack Looks Like

During a recent live demonstration, FC (also known as Freakyclown), an ethical hacker and CEO of Cygenta, showed how a hacker might use ChatGPT to write a convincing phishing email.

FC starts by showing some dead ends in the tool: ChatGPT won’t generate a malicious email when asked to do so directly. FC bypasses this safeguard by asking the tool to write an email from the perspective of a vendor whose bank account was shut down, urging the target to use a new account number for its invoices. With only a couple of prompts, FC gets the AI tool to produce an urgent, succinct phishing email that could easily trick the average end user.

“This is a force multiplier. A hacker could take that same information and write that email. But this is done in five seconds and is more effective,” says FC. “This is even more powerful if you have samples from your victim and how they speak. You can impersonate them more accurately.”

By feeding in a sample email from “CEO John Smith,” FC tailors an email to more accurately mimic the voice of a legitimate sender.

Interestingly, ChatGPT can also identify the red flags in its own output. When prompted, it explained why the email it had just generated might be an attempt to socially engineer the recipient.

Because the tool can point out weaknesses in its own drafts, hackers can go beyond basic malicious email creation and use generative AI iteratively to refine their attacks. Examples include making red flags less obvious, anticipating victims’ responses, and making the tone more convincing.

Generative AI tools also help hackers research targets almost instantly. Asking the tool to list a company’s executive team and where each executive went to school is much faster than digging up that information on LinkedIn. Because this research takes only seconds, attackers can make each email more tailored than the last.

These are just a few examples of how cybercriminals can use generative AI. As FC noted, we are still in the very early stages of this technology and can’t fully anticipate its consequences. But that doesn’t mean the fight is lost. There are steps your business can take to protect itself against email-based attacks.

Protecting Against Generative AI Email Attacks

It’s important to remember that the attacks themselves are not novel. What is leaping forward is how cybercriminals generate them. The volume and scale of attacks are changing, but the underlying mechanisms are not.

With that in mind, here are a few steps your organization can take.

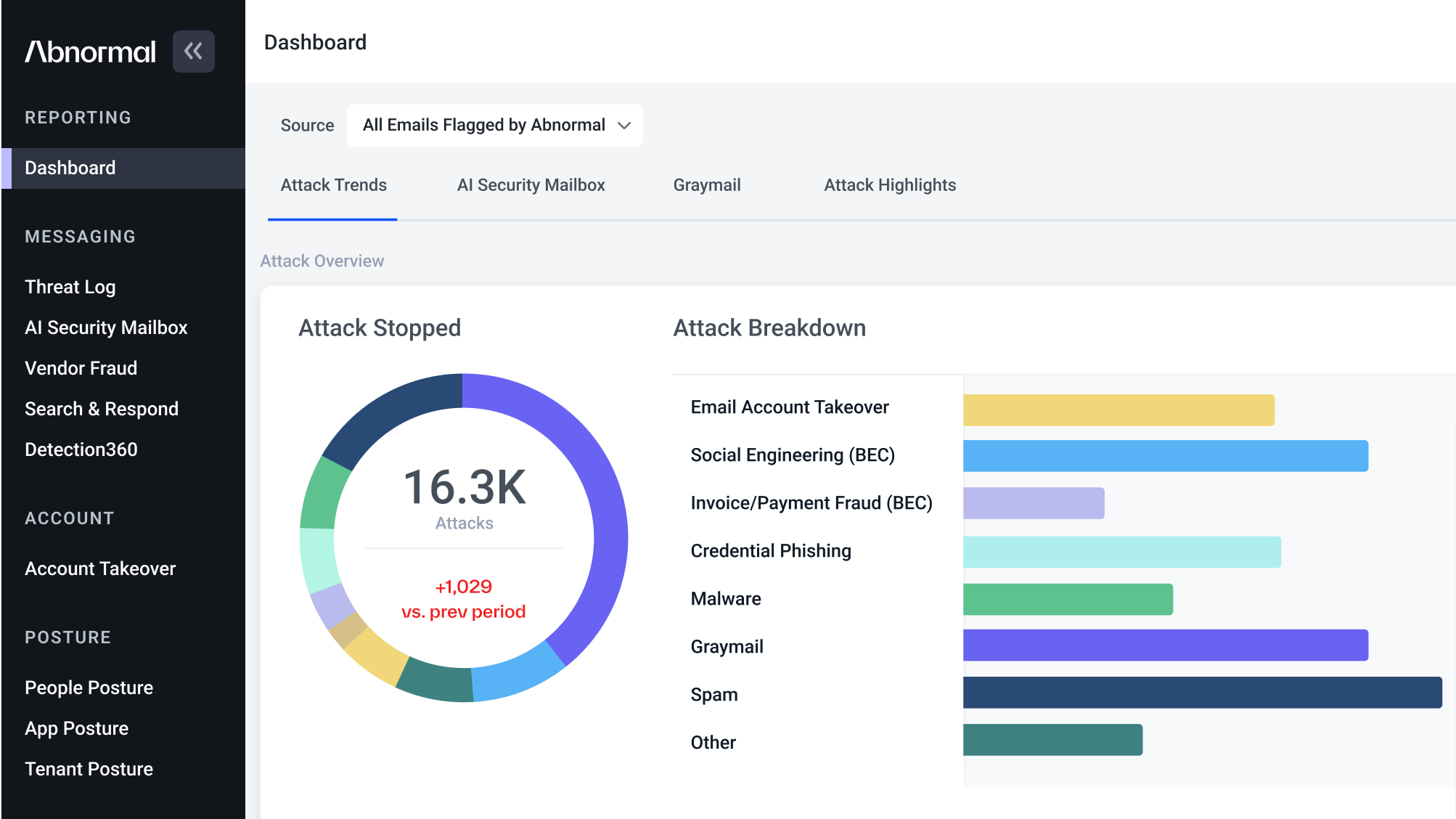

Double down on your enterprise email security. You need AI to fight AI as we move into this new phase of attack. Abnormal Security uses AI and machine learning to detect abnormal behaviors and block attacks before they reach your employees.

Integrate your solutions for better protection. When possible, choose vendors whose products integrate with your existing security tools so you have visibility across your entire environment. For example, the CrowdStrike integration with Abnormal can better detect compromised accounts because it looks for anomalous activity across both endpoint devices and email accounts.

Keep training employees to stay vigilant. If social engineering and phishing attacks are becoming more persuasive, employees need to sharpen their ability to recognize suspicious requests. Make sure your employees understand generative AI and how it makes these threats harder to detect than ever before.

As the AI landscape evolves, security leaders must be prepared. And as ChatGPT and similar tools keep growing, that need will only increase.

For more insight into how these attacks are created, watch our on-demand webinar featuring FC and CrowdStrike, “ChatGPT Exposed: Protecting Your Organization Against the Dark Side of AI,” or schedule a demo today!
