ChatGPT Phishing Attacks: You’re Still Protected With Abnormal

OpenAI’s wildly popular chatbot ChatGPT has been all over the news since its release in November 2022. Its ability to pass legal exams, write feature-length articles, and generate working software has sparked significant conversation about the power of Generative AI. But like most new technologies, ChatGPT comes with its share of both benefits and pitfalls. On the one hand, it can provide increased efficiency, quality, and cost savings. On the other hand, this smart tech can be dangerous in the hands of bad actors. This concern is increasingly pressing as advances like Meta's LLaMA democratize access to this technology.

In this article, we will assess the potential security risks of generative models like ChatGPT, how Abnormal protects you from these risks, and how Generative AI can be used to stay one step ahead of cybercriminals.

What is Generative AI and Why Does It Matter?

Generative AI is a form of artificial intelligence technology that produces content including text, imagery, audio, and synthetic data. The most common examples of text-based generative AI tools are chatbots, virtual assistants, and copywriting tools, including ChatGPT. Less common examples include tools that generate computer programs and data reports.

ChatGPT, specifically, is a natural language processing tool that uses a combination of large language models (LLMs) and reinforcement learning to create human-like conversations and expedite tasks like composing emails, essays, and even code. The benefit in all these use cases, and of Generative AI overall, is increased efficiency, which yields additional benefits like cost savings, faster results, and in some cases, improved quality.

How Bad Actors Weaponize ChatGPT

While this new technology has several benefits, its sophisticated language models also create opportunities for cyberattackers to elevate their social engineering scams. Generative AI language models can easily compose a convincing phishing or business email compromise (BEC) email with flawless grammar and a clear prompt for action, making it extremely difficult for a recipient to distinguish between a safe communication and a malicious one.

Below is an example of a ChatGPT-created email requesting invoice payment that a cyberattacker could employ in an effort to commit payment fraud.

To generate this email, all I had to do was type “create an email requesting payment” into ChatGPT. As you can see, the body and the header of the email are extremely well-written with little to no grammar mistakes. The messaging comes across as very human-like in tone and verbiage.

Many phishing emails generated by bad actors are written in choppy language, missing parts of sentences, and often contain other more obvious indicators of compromise. All of these could likely be detected by traditional security methods, if not by a keen-eyed employee. Needless to say, emails like the one above have a far better chance of slipping by legacy defenses unnoticed and could easily be the foot in the door hackers need to initiate malicious attacks like payment/invoice fraud.

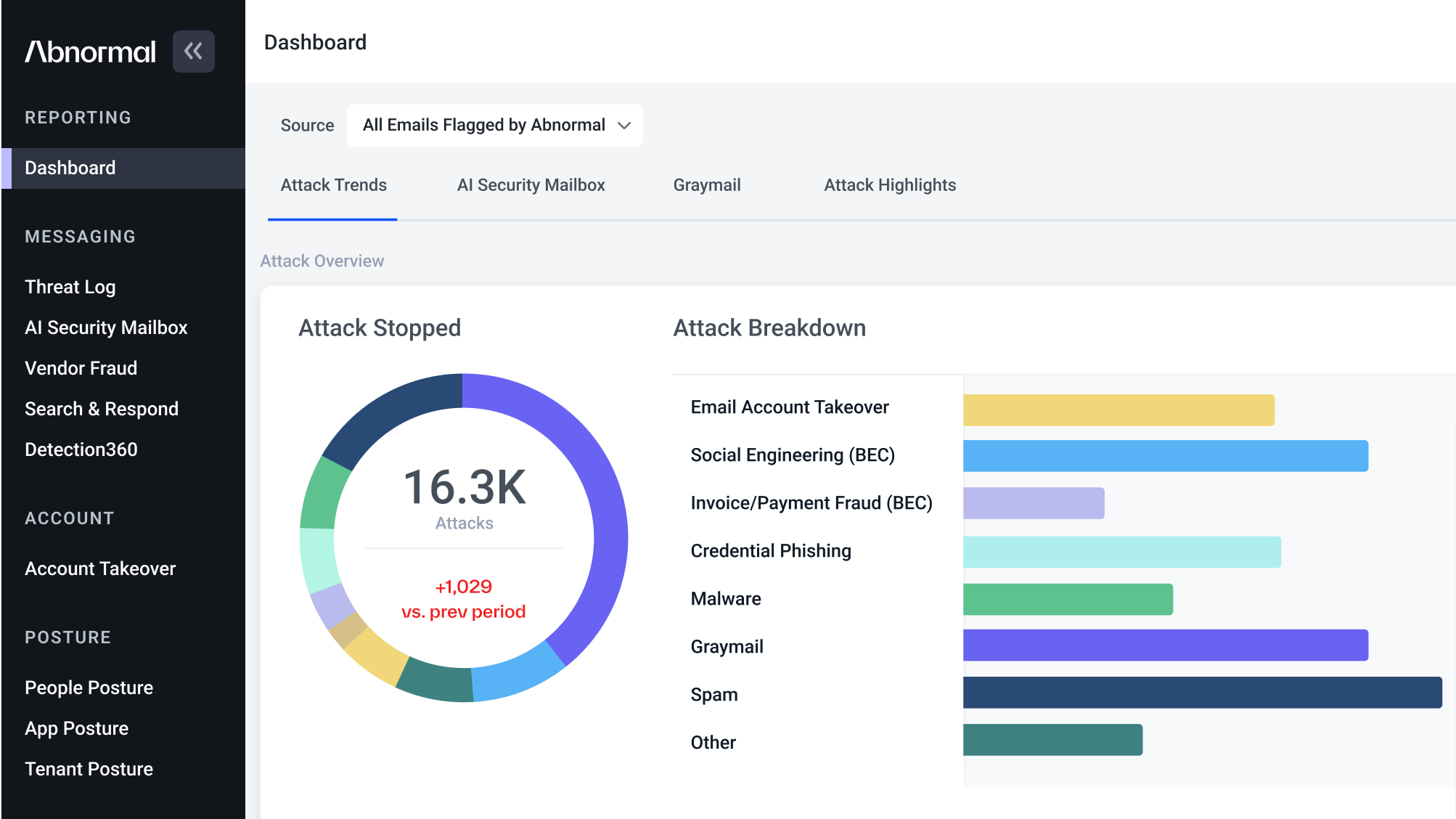

Abnormal Has You Covered

At Abnormal, we have designed our cyberattack detection systems to be resilient to these kinds of next-generation commoditized attacks. By deploying tools like BERT-based large language models, the Abnormal solution can identify a threat actor’s social engineering attempts by determining whether two emails are similar and part of the same polymorphic email campaign targeting an organization. These improved detection models allow us to out-innovate cybercriminals and offer our customers the best possible defense against even the most sophisticated attacks.
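
To make the idea of campaign matching concrete, here is a minimal sketch of comparing two emails by vector similarity. It is not Abnormal's implementation: the word-count `embed` function is a deliberately simple stand-in for a BERT sentence embedding, and the function names, sample emails, and the 0.6 threshold are all assumptions chosen for illustration.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a stand-in for a BERT vector)."""
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def same_campaign(email_a: str, email_b: str, threshold: float = 0.6) -> bool:
    """Flag two emails as likely variants of one campaign.

    The threshold is an illustrative assumption, not a tuned value.
    """
    return cosine_similarity(embed(email_a), embed(email_b)) >= threshold


# Hypothetical sample emails: two reworded variants and one unrelated message.
variant_1 = "Please find attached the invoice for March. Kindly remit payment by Friday."
variant_2 = "Please find attached the invoice for April. Kindly remit payment by Monday."
unrelated = "Team lunch is moved to noon tomorrow in the main cafeteria."

print(same_campaign(variant_1, variant_2))  # True
print(same_campaign(variant_1, unrelated))  # False
```

Word counts only catch variants that reuse the same vocabulary. A learned embedding model would aim to place paraphrased emails close together as well, which is the property that matters for polymorphic campaigns.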

Using ChatGPT + Generative AI for Phishing Detection

At Abnormal Security, we are always looking for ways to use powerful and innovative new technology to improve our detection capabilities. Generative AI is no exception. For example, we can use large language models like ChatGPT to craft synthetic phishing and social engineering emails and add them to the data our detection models learn from. This extra data increases the resilience and generalizability of our machine learning systems.

However, it’s vital that your organization remains diligent when employing any kind of new generative AI technology. But don’t just take our word for it. Here’s what ChatGPT has to say about the future of AI and phishing attacks:

Interested in learning more about how we utilize machine learning at Abnormal? Schedule a demo today!
