Stopping New Email Attacks with Data Augmentation and Rapidly-Training Models

On October 21st, 2020, just two weeks before the US general election, many voters in Florida received threatening emails purportedly from the “Proud Boys." These attacks often included some personal information like an address or phone number, threatened violence if they did not vote for Donald Trump, and implied that they had access to the voting infrastructure which would reveal to the attacker how an individual voted. This claim is exceedingly unlikely to be true, but as with other exploitation attacks, once an attacker includes some small amount of personal information, the victim will be more likely to believe these more outlandish claims.

Email security systems did not stop this attack because the pattern had not been seen before. However, Abnormal was able to incorporate the attack into our NLP models within hours to enable detection capabilities for future such attacks.

This story exemplifies one of the hardest challenges of building an effective ML system to prevent email attacks. That is, the rapidly changing and adversarial nature of the problem. Attackers are constantly innovating, not only launching new attack campaigns, but also tweaking the language and social engineering strategies they employ to convince people to give up their login credentials, install malware, or send money to a fraudulent bank account, among other activities.

Retraining the NLP Pipeline

At Abnormal, we tackle this problem by providing our ML models with the most up-to-date information possible. We have both automated systems and security researchers keeping up with the latest attacks. The data gathered is then consumed by a rapidly retraining NLP pipeline.

As a thought exercise, let’s imagine we missed an attack with the following text content:

Subject: Account payment overdue

We haven’t received the invoice payment for invoice #12335. We’ve been trying to contact your accounting department for a month and if we don’t hear back your service will be terminated immediately

Regards,

Oleg

Perhaps our existing text representation did not identify the particular threat of terminating a service, which is a constant challenge as attackers adapt. We would like to immediately re-train our text models to learn this pattern.

However, putting just one sample into the system is unlikely to improve the model enough to catch anything beyond that exact message, even if we use weighting schemes. We would like to learn to detect similar attacks because attackers will be unlikely to use the exact text template in the future. Our solution is to use text augmentation.

For each of these missed attacks, we generate many training samples using text augmentation and use a few open-source text augmentation libraries for this with some of our own changes.

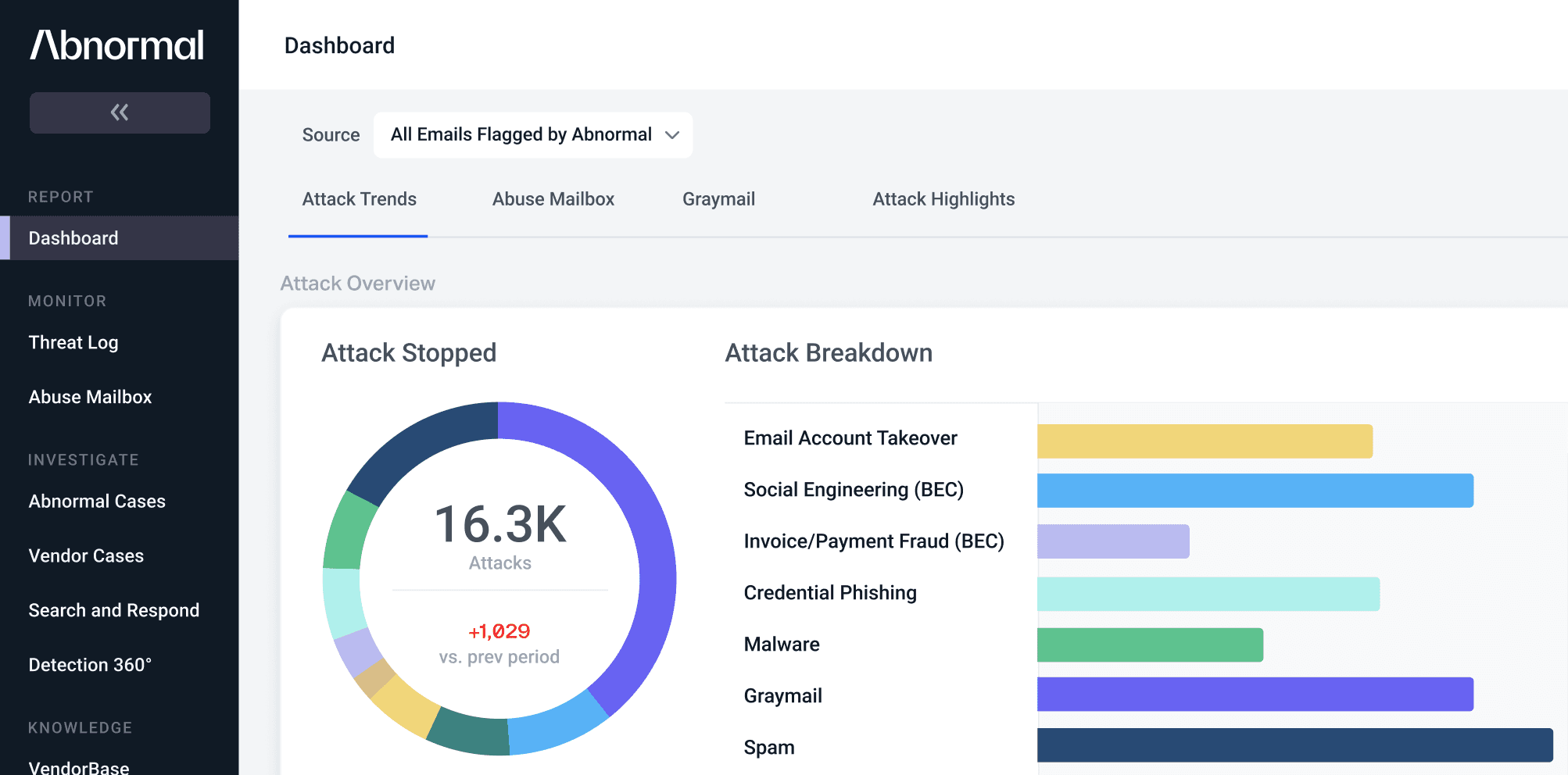

Once we have generated the augmented samples we retrain our model as before. Currently, we use a combination of word and character-level CNNs (convolutional neural networks) to learn to predict various attack labels, such as an attack, spam, graymail, etc. This model is then used directly as an input into our detection stack, and as features in other models.

Some of the challenges in building this system include:

- For new attacks, we often have a very limited set of examples, which are very easily ignored by our model training. It is hard to capture and ensure we have the right level of signal but do not overwhelm other samples using the augmentation.

- It’s hard to maintain model precision because, in the vast volume of legitimate emails, there are often many edge cases that appear similar to attacks.

- We must set up a robust data pipeline to make sure there is always the latest data to train, and that means more robust data pipelines.

- We must find a model structure that is quick to retrain, robust to converge, and expressive enough to learn.

Catching Election Interference Emails with This System

Using this system, we fed in an example of the emails noted in the Washington Post article. After running this message through the trained model, we can verify it is caught with a very high score.

But the open question is whether this model will indeed catch similar, but differently worded, messages. To test this, we can construct a new message and run it through the model.

The model scores this high as well (in this case at 99.6), while our previous model did not score this high at all.

After incorporating this attack into our detection model, Abnormal was able to detect and stop a significant number of other related attacks and spam that used election-related terminology.

If developing machine learning models and software systems to stop cybercrime interests you, we’re hiring! Check out our Careers page to learn more and apply.

See the Abnormal Solution to the Email Security Problem

Protect your organization from the full spectrum of email attacks with Abnormal.